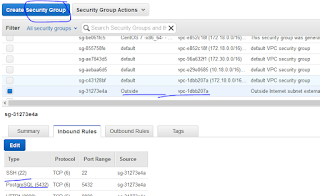

Go to VPC: check the security groups and network ACLs.

Go to EC2: check the security groups and ACLs.

They must allow inbound and outbound traffic for the source (e.g. 0.0.0.0/0).
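A minimal sketch of what "allow traffic for a source" means, assuming a simplified rule of source CIDR plus port range. The rule dictionaries and the function names `rule_allows` and `is_allowed` are hypothetical, made up for illustration; they are not an AWS API.

```python
import ipaddress

def rule_allows(rule, source_ip, port):
    """Return True if one simplified inbound rule admits this source IP and port."""
    network = ipaddress.ip_network(rule["cidr"])
    in_port_range = rule["from_port"] <= port <= rule["to_port"]
    return ipaddress.ip_address(source_ip) in network and in_port_range

# Hypothetical rules: SSH open to everyone, HTTPS only from inside 172.17.0.0/16.
rules = [
    {"cidr": "0.0.0.0/0", "from_port": 22, "to_port": 22},
    {"cidr": "172.17.0.0/16", "from_port": 443, "to_port": 443},
]

def is_allowed(source_ip, port):
    """Traffic passes if any rule matches (no rule match means it is dropped)."""
    return any(rule_allows(rule, source_ip, port) for rule in rules)
```

With these rules, `is_allowed("8.8.8.8", 22)` is true while `is_allowed("8.8.8.8", 443)` is false, which is the kind of mismatch to look for when a connection hangs.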

========================

For the static public IP issue

Install pritunl on the bastion server

---

Install the client on your ansible server like this

yum install openvpn

systemctl enable openvpn@server

vim /etc/openvpn/server.conf (create this file and add the private IP and all the settings you got from the server)

systemctl start openvpn@server

*************************************************************

Connectivity problems between different VPCs.

Solution:

01) VPC Peering Connections -> Create VPC Peering Connection (acts like a router in the middle of both VPCs) -> select both VPCs -> then add a route to the route table with the required destination; the target is the created pcx (just like a static route)

****** create 2 route tables, one for each VPC id, then add the other VPC's subnet as destination with the related pcx as target ******

02) check the destination server's inbound rules (select the target server, then its security group rules)

============================

Correct Theory - See Below

============================


*******

SSL Cert for ELB

01) paste the certificate block and the private key block given by the provider.

02) paste the chain block, referring to https://knowledge.symantec.com/support/device-certificate-services/index?page=content&id=SO26895&actp=RSS&viewlocale=en_US#links

Name Servers - Route 53

I have a domain, product.lk, registered with a different vendor.

I need to give all control (adding A records, etc.) to my AWS account.

Then,

01) create a Hosted Zone for "product.lk"; AWS will then show the name servers (4) for that hosted zone.

02) copy those AWS name servers one by one and paste them as the name servers on the original DNS provider's site (e.g. GoDaddy).

03) now you have given control to AWS Route 53. You can add A records, etc. in the Route 53 hosted zone panel.

==============================

correct way to create infrastructure

==============================

VPC 172.17.0.0/16

SUBNET PVT 172.17.1.0/24

SUBNET PUBLIC 172.17.2.0/24

ROUTE1 (Associate PUBLIC) + Add IGW for 0.0.0.0/0 traffic

ROUTE2 (Associate PVT SUBNET) + ADD NAT(will need ElasticIP) GW for 0.0.0.0/0 traffic

USE SEC GROUP ALLOW 22 and necessary ports >> use this for all instances

VPN SERVER ON PUBLIC SUBNET (will need elastic ip)

SERVER1 on PUBLIC SUBNET

SERVER2 on PVT SUBNET
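The address plan above can be sanity-checked before creating anything. This is a small sketch using only the CIDRs listed in these notes; the helper name `check_layout` is hypothetical.

```python
import ipaddress

vpc = ipaddress.ip_network("172.17.0.0/16")
subnets = {
    "private": ipaddress.ip_network("172.17.1.0/24"),
    "public": ipaddress.ip_network("172.17.2.0/24"),
}

def check_layout(vpc, subnets):
    """Return a list of problems: subnets outside the VPC or overlapping each other."""
    problems = []
    names = sorted(subnets)
    for name in names:
        if not subnets[name].subnet_of(vpc):
            problems.append("%s is outside the VPC range" % name)
    for i, first in enumerate(names):
        for second in names[i + 1:]:
            if subnets[first].overlaps(subnets[second]):
                problems.append("%s overlaps %s" % (first, second))
    return problems

problems = check_layout(vpc, subnets)
```

An empty list means every subnet sits inside the VPC range and no two subnets collide.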

-----------------

all of the above can be reached once the vpn server is up, set up as below

------------------

yum update -y

yum install epel-release -y

cd /tmp/

yum install wget -y

wget https://github.com/pritunl/pritunl/releases/download/1.0.462.77/pritunl-1.0.462.77-1.el7.centos.x86_64.rpm

(newer release: https://github.com/pritunl/pritunl/releases/download/1.28.1487.93/pritunl-1.28.1487.93-1.el7.centos.x86_64.rpm)

yum install pritunl-1.0.462.77-1.el7.centos.x86_64.rpm -y

vi /etc/yum.repos.d/mongo.repo

[mongodb]

name=MongoDB

baseurl=http://downloads-distro.mongodb.org/repo/redhat/os/x86_64/

gpgcheck=0

enabled=1

yum install mongodb-org

systemctl start pritunl

systemctl start mongod

systemctl enable pritunl

systemctl enable mongod

open the pritunl web console: https://34.203.125.132:9700

when asked for the MongoDB URI, save this one:

mongodb://localhost:27017/pritunl

log in with username pritunl and password pritunl

change the username and password

users -> add an organization (myOrg)

servers -> add server -> give it a name, keep the other defaults

attach the organization to the server

start the server

download the user key and add it to the client (UDP port 13342, the port shown when creating the server above, must be allowed on the server instance)

you may face docker network interface conflicts; if so, change the docker interface IP on your local PC (stop docker, then run: dockerd --bip=172.101.0.211/16)

now connect using the downloaded profile with the OpenVPN client or the pritunl client.

you can now reach the private IP of the vpn server

you can also ping the other servers' private IPs (remember to allow ICMP from the VPN-provided IPs, or from everywhere)
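The docker conflict mentioned in the steps above can be checked with a few lines. This sketch assumes the local Docker bridge still uses its default address 172.17.0.1/16, which falls inside the 172.17.0.0/16 VPC used in these notes; the function name `conflicts` is hypothetical.

```python
import ipaddress

# Docker's default bridge address (assumed unchanged on the local PC).
docker_default = ipaddress.ip_interface("172.17.0.1/16").network
# VPC range reached through the VPN, from the layout in these notes.
vpc = ipaddress.ip_network("172.17.0.0/16")
# Bridge network that results from: dockerd --bip=172.101.0.211/16
docker_new = ipaddress.ip_interface("172.101.0.211/16").network

def conflicts(local_net, remote_net):
    """Two networks conflict when their address ranges overlap."""
    return local_net.overlaps(remote_net)

before = conflicts(docker_default, vpc)
after = conflicts(docker_new, vpc)
```

Here `before` is true and `after` is false, so the new bridge address clears the clash. Note that 172.101.0.0/16 lies outside the RFC 1918 private block 172.16.0.0/12, so a range inside the private blocks that does not overlap the VPC may be a safer choice.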

----------------------

site to site

01) install the pritunl server in the VPC

add a route for the VPC network address only, e.g. 10.41.0.0 (the VPN's virtual network is also there, e.g. 192.168.241.0)

02) download the user profile

03) add it to the internal server (x.x.x.195)

i) cd /etc/openvpn/

vim prof.conf

systemctl restart openvpn@prof

you will see 2 tun routes added to the routing table (route -n)

10.41.0.0 192.168.241.1 255.255.0.0 UG 0 0 0 tun4

192.168.241.0 0.0.0.0 255.255.255.0 U 0 0 0 tun4

ii) add the following entries to iptables

cat /etc/sysconfig/iptables

*filter

:INPUT ACCEPT [0:0]

:FORWARD ACCEPT [0:0]

:OUTPUT ACCEPT [0:0]

COMMIT

*nat

:PREROUTING ACCEPT [0:0]

:POSTROUTING ACCEPT [0:0]

:INPUT ACCEPT [0:0]

:OUTPUT ACCEPT [0:0]

-A POSTROUTING -o tun0 -j MASQUERADE

-A POSTROUTING -o tun1 -j MASQUERADE

-A POSTROUTING -o tun2 -j MASQUERADE

-A POSTROUTING -o tun4 -j MASQUERADE

COMMIT

iii) add a static route on the internal firewall so that 10.41.0.0/16 is routed through x.x.x.195

DONE site to site
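To see how the two tun4 routes from the `route -n` output above get picked, here is a small longest-prefix-match sketch. It only models those two entries; the function name `lookup` is hypothetical.

```python
import ipaddress

# (destination network, gateway, interface), copied from the route -n output above.
ROUTES = [
    (ipaddress.ip_network("10.41.0.0/255.255.0.0"), "192.168.241.1", "tun4"),
    (ipaddress.ip_network("192.168.241.0/255.255.255.0"), "0.0.0.0", "tun4"),
]

def lookup(dest_ip, routes=ROUTES):
    """Return the most specific matching route, or None when nothing matches."""
    address = ipaddress.ip_address(dest_ip)
    matches = [route for route in routes if address in route[0]]
    if not matches:
        return None
    # The route with the longest prefix (most specific network) wins.
    return max(matches, key=lambda route: route[0].prefixlen)
```

A VPC address such as 10.41.3.9 resolves to gateway 192.168.241.1 on tun4, while an address on the VPN's virtual network is reached directly (gateway 0.0.0.0).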

--------------------

create 2nd VPC2 (172.18.0.0/16)

create SUBNET1_VPC2 (172.18.1.0/24)

CREATE IGW2 and ADD ROUTE1_VPC2 (0.0.0.0/0 --> IGW2) and associate subnet1_VPC2

create instance server1_VPC2 with a new sec group (allow 22 and icmp to test)

*********PEERING************

create a VPC PEER Connection between VPC1 and VPC2, accept it

GO ROUTE1_VPC1 > 172.18.0.0/16 > PEER pcx

GO ROUTE2_VPC1 > 172.18.0.0/16 > PEER pcx

GO ROUTE1_VPC2 > 172.17.0.0/16 > PEER pcx

Now you can even ping a 172.18.1.x instance when you are connected to the vpn from your pc
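The peering routes follow a simple rule: every route table in one VPC gets the other VPC's CIDR as destination with the pcx as target. A sketch of that rule, assuming VPC1 has two route tables (ROUTE1_VPC1 and ROUTE2_VPC1) and VPC2 has one (ROUTE1_VPC2); the id `pcx-example` and the function name `peering_routes` are placeholders.

```python
import ipaddress

def peering_routes(vpc_a, vpc_b, pcx_id):
    """Return (route table, destination CIDR, target) entries for both sides."""
    cidr_a = ipaddress.ip_network(vpc_a["cidr"])
    cidr_b = ipaddress.ip_network(vpc_b["cidr"])
    # Peering cannot route between overlapping address ranges.
    if cidr_a.overlaps(cidr_b):
        raise ValueError("VPC CIDRs overlap: %s and %s" % (cidr_a, cidr_b))
    routes = []
    for table in vpc_a["route_tables"]:
        routes.append((table, str(cidr_b), pcx_id))
    for table in vpc_b["route_tables"]:
        routes.append((table, str(cidr_a), pcx_id))
    return routes

vpc1 = {"cidr": "172.17.0.0/16", "route_tables": ["ROUTE1_VPC1", "ROUTE2_VPC1"]}
vpc2 = {"cidr": "172.18.0.0/16", "route_tables": ["ROUTE1_VPC2"]}
routes = peering_routes(vpc1, vpc2, "pcx-example")
```

The result lists three entries, one per route table, which is the checklist to compare against the console.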

**********************

IAM

**********************

create new group ->name= FullAccess -> search and tick AmazonEC2FullAccess

create new group ->name= All_ReadOnly --> search and tick ReadOnlyAccess

new user-->name=ansible

new user-->name=terraform

tick Programmatic access; if dashboard access is needed, tick AWS Management Console access also

custom password

untick Require reset pw

select group FullAccess

create user

copy and paste in safe place Access key, secret key and pw

close

new user-->name=ansible

tick Programmatic access; if dashboard access is needed, tick AWS Management Console access also

custom password

untick Require reset pw

select group All_ReadOnly

create user

copy and paste in safe place Access key, secret key and pw

close

in a centos server

vim ec2.ini (set at least these values; the full sample file is further down)

[ec2]

# use whatever region you run in

regions = us-east-1

[credentials]

aws_access_key_id = xxxxxxxxxxxxxxxx

aws_secret_access_key = xxxxxxxxxxxx
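A quick way to confirm the file parses the way ec2.py will read it is to load it with `configparser`. This is a sketch under the assumption that the settings live in `[ec2]` and `[credentials]` sections, as in the sample file further down; `SAMPLE` and `load_settings` are hypothetical names, and the keys are placeholders.

```python
import configparser

# Stand-in for the contents of ec2.ini (placeholder credentials).
SAMPLE = """\
[ec2]
regions = us-east-1
cache_max_age = 0

[credentials]
aws_access_key_id = xxxxxxxxxxxxxxxx
aws_secret_access_key = xxxxxxxxxxxx
"""

def load_settings(text):
    """Parse ini text and return (list of regions, credentials dict)."""
    config = configparser.ConfigParser()
    config.read_string(text)
    regions = [r.strip() for r in config.get("ec2", "regions").split(",")]
    credentials = {}
    if config.has_section("credentials"):
        credentials = dict(config.items("credentials"))
    return regions, credentials

regions, credentials = load_settings(SAMPLE)
```

If the section headers are missing, `configparser` raises `MissingSectionHeaderError`, which is an easy mistake to catch this way before running the inventory script.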

===============================================

sample files

===============================================

vim ec2.ini

# Ansible EC2 external inventory script settings

#

[ec2]

# to talk to a private eucalyptus instance uncomment these lines

# and edit eucalyptus_host to be the host name of your cloud controller

#eucalyptus = True

#eucalyptus_host = clc.cloud.domain.org

# AWS regions to make calls to. Set this to 'all' to make request to all regions

# in AWS and merge the results together. Alternatively, set this to a comma

# separated list of regions. E.g. 'us-east-1,us-west-1,us-west-2'

regions = us-east-1

regions_exclude = us-gov-west-1,cn-north-1

# When generating inventory, Ansible needs to know how to address a server.

# Each EC2 instance has a lot of variables associated with it. Here is the list:

# http://docs.pythonboto.org/en/latest/ref/ec2.html#module-boto.ec2.instance

# Below are 2 variables that are used as the address of a server:

# - destination_variable

# - vpc_destination_variable

# This is the normal destination variable to use. If you are running Ansible

# from outside EC2, then 'public_dns_name' makes the most sense. If you are

# running Ansible from within EC2, then perhaps you want to use the internal

# address, and should set this to 'private_dns_name'. The key of an EC2 tag

# may optionally be used; however the boto instance variables hold precedence

# in the event of a collision.

destination_variable = private_ip_address

# This allows you to override the inventory_name with an ec2 variable, instead

# of using the destination_variable above. Addressing (aka ansible_ssh_host)

# will still use destination_variable. Tags should be written as 'tag_TAGNAME'.

#hostname_variable = tag_Name

# For server inside a VPC, using DNS names may not make sense. When an instance

# has 'subnet_id' set, this variable is used. If the subnet is public, setting

# this to 'ip_address' will return the public IP address. For instances in a

# private subnet, this should be set to 'private_ip_address', and Ansible must

# be run from within EC2. The key of an EC2 tag may optionally be used; however

# the boto instance variables hold precedence in the event of a collision.

# WARNING: - instances that are in the private vpc, _without_ public ip address

# will not be listed in the inventory until You set:

# vpc_destination_variable = private_ip_address

vpc_destination_variable = private_ip_address

# The following two settings allow flexible ansible host naming based on a

# python format string and a comma-separated list of ec2 tags. Note that:

#

# 1) If the tags referenced are not present for some instances, empty strings

# will be substituted in the format string.

# 2) This overrides both destination_variable and vpc_destination_variable.

#

#destination_format = {0}.{1}.example.com

#destination_format_tags = Name,environment

# To tag instances on EC2 with the resource records that point to them from

# Route53, uncomment and set 'route53' to True.

route53 = False

# To exclude RDS instances from the inventory, uncomment and set to False.

#rds = False

# To exclude ElastiCache instances from the inventory, uncomment and set to False.

#elasticache = False

# Additionally, you can specify the list of zones to exclude looking up in

# 'route53_excluded_zones' as a comma-separated list.

# route53_excluded_zones = samplezone1.com, samplezone2.com

# By default, only EC2 instances in the 'running' state are returned. Set

# 'all_instances' to True to return all instances regardless of state.

all_instances = False

# By default, only EC2 instances in the 'running' state are returned. Specify

# EC2 instance states to return as a comma-separated list. This

# option is overridden when 'all_instances' is True.

# instance_states = pending, running, shutting-down, terminated, stopping, stopped

# By default, only RDS instances in the 'available' state are returned. Set

# 'all_rds_instances' to True to return all RDS instances regardless of state.

all_rds_instances = False

# By default, only ElastiCache clusters and nodes in the 'available' state

# are returned. Set 'all_elasticache_clusters' and/or 'all_elasticache_nodes'

# to True to return all ElastiCache clusters and nodes, regardless of state.

#

# Note that all_elasticache_nodes only applies to listed clusters. That means

# if you set all_elasticache_clusters to false, no node will be returned from

# unavailable clusters, regardless of the state and to what you set for

# all_elasticache_nodes.

all_elasticache_replication_groups = False

all_elasticache_clusters = False

all_elasticache_nodes = False

# API calls to EC2 are slow. For this reason, we cache the results of an API

# call. Set this to the path you want cache files to be written to. Two files

# will be written to this directory:

# - ansible-ec2.cache

# - ansible-ec2.index

cache_path = ~/.ansible/tmp

# The number of seconds a cache file is considered valid. After this many

# seconds, a new API call will be made, and the cache file will be updated.

# To disable the cache, set this value to 0

cache_max_age = 0

# Organize groups into a nested/hierarchy instead of a flat namespace.

nested_groups = False

# Replace - tags when creating groups to avoid issues with ansible

replace_dash_in_groups = True

# If set to true, any tag of the form "a,b,c" is expanded into a list

# and the results are used to create additional tag_* inventory groups.

expand_csv_tags = False

# The EC2 inventory output can become very large. To manage its size,

# configure which groups should be created.

group_by_instance_id = True

group_by_region = True

group_by_availability_zone = True

group_by_ami_id = True

group_by_instance_type = True

group_by_key_pair = True

group_by_vpc_id = True

group_by_security_group = True

group_by_tag_keys = True

group_by_tag_none = True

group_by_route53_names = True

group_by_rds_engine = True

group_by_rds_parameter_group = True

group_by_elasticache_engine = True

group_by_elasticache_cluster = True

group_by_elasticache_parameter_group = True

group_by_elasticache_replication_group = True

# If you only want to include hosts that match a certain regular expression

# pattern_include = staging-*

# If you want to exclude any hosts that match a certain regular expression

# pattern_exclude = staging-*

# Instance filters can be used to control which instances are retrieved for

# inventory. For the full list of possible filters, please read the EC2 API

# docs: http://docs.aws.amazon.com/AWSEC2/latest/APIReference/ApiReference-query-DescribeInstances.html#query-DescribeInstances-filters

# Filters are key/value pairs separated by '=', to list multiple filters use

# a list separated by commas. See examples below.

# Retrieve only instances with (key=value) env=staging tag

# instance_filters = tag:env=staging

# Retrieve only instances with role=webservers OR role=dbservers tag

# instance_filters = tag:role=webservers,tag:role=dbservers

# Retrieve only t1.micro instances OR instances with tag env=staging

# instance_filters = instance-type=t1.micro,tag:env=staging

# You can use wildcards in filter values also. Below will list instances which

# tag Name value matches webservers1*

# (ex. webservers15, webservers1a, webservers123 etc)

# instance_filters = tag:Name=webservers1*

# A boto configuration profile may be used to separate out credentials

# see http://boto.readthedocs.org/en/latest/boto_config_tut.html

# boto_profile = some-boto-profile-name

[credentials]

# The AWS credentials can optionally be specified here. Credentials specified

# here are ignored if the environment variable AWS_ACCESS_KEY_ID or

# AWS_PROFILE is set, or if the boto_profile property above is set.

#

# Supplying AWS credentials here is not recommended, as it introduces

# non-trivial security concerns. When going down this route, please make sure

# to set access permissions for this file correctly, e.g. handle it the same

# way as you would a private SSH key.

#

# Unlike the boto and AWS configure files, this section does not support

# profiles.

#

aws_access_key_id = xxxxxxxxxxx

aws_secret_access_key = xxxxxxxxxxxxx

# aws_security_token = XXXXXXXXXXXXXXXXXXXXXXXXXXXX

======================================================================

ec2.py

======================================

#!/usr/bin/env python

'''

EC2 external inventory script

=================================

Generates inventory that Ansible can understand by making API request to

AWS EC2 using the Boto library.

NOTE: This script assumes Ansible is being executed where the environment

variables needed for Boto have already been set:

export AWS_ACCESS_KEY_ID='AK123'

export AWS_SECRET_ACCESS_KEY='abc123'

This script also assumes there is an ec2.ini file alongside it. To specify a

different path to ec2.ini, define the EC2_INI_PATH environment variable:

export EC2_INI_PATH=/path/to/my_ec2.ini

If you're using eucalyptus you need to set the above variables and

you need to define:

export EC2_URL=http://hostname_of_your_cc:port/services/Eucalyptus

If you're using boto profiles (requires boto>=2.24.0) you can choose a profile

using the --boto-profile command line argument (e.g. ec2.py --boto-profile prod) or using

the AWS_PROFILE variable:

AWS_PROFILE=prod ansible-playbook -i ec2.py myplaybook.yml

For more details, see: http://docs.pythonboto.org/en/latest/boto_config_tut.html

When run against a specific host, this script returns the following variables:

- ec2_ami_launch_index

- ec2_architecture

- ec2_association

- ec2_attachTime

- ec2_attachment

- ec2_attachmentId

- ec2_client_token

- ec2_deleteOnTermination

- ec2_description

- ec2_deviceIndex

- ec2_dns_name

- ec2_eventsSet

- ec2_group_name

- ec2_hypervisor

- ec2_id

- ec2_image_id

- ec2_instanceState

- ec2_instance_type

- ec2_ipOwnerId

- ec2_ip_address

- ec2_item

- ec2_kernel

- ec2_key_name

- ec2_launch_time

- ec2_monitored

- ec2_monitoring

- ec2_networkInterfaceId

- ec2_ownerId

- ec2_persistent

- ec2_placement

- ec2_platform

- ec2_previous_state

- ec2_private_dns_name

- ec2_private_ip_address

- ec2_publicIp

- ec2_public_dns_name

- ec2_ramdisk

- ec2_reason

- ec2_region

- ec2_requester_id

- ec2_root_device_name

- ec2_root_device_type

- ec2_security_group_ids

- ec2_security_group_names

- ec2_shutdown_state

- ec2_sourceDestCheck

- ec2_spot_instance_request_id

- ec2_state

- ec2_state_code

- ec2_state_reason

- ec2_status

- ec2_subnet_id

- ec2_tenancy

- ec2_virtualization_type

- ec2_vpc_id

These variables are pulled out of a boto.ec2.instance object. There is a lack of

consistency with variable spellings (camelCase and underscores) since this

just loops through all variables the object exposes. It is preferred to use the

ones with underscores when multiple exist.

In addition, if an instance has AWS Tags associated with it, each tag is a new

variable named:

- ec2_tag_[Key] = [Value]

Security groups are comma-separated in 'ec2_security_group_ids' and

'ec2_security_group_names'.

'''

# (c) 2012, Peter Sankauskas

#

# This file is part of Ansible,

#

# Ansible is free software: you can redistribute it and/or modify

# it under the terms of the GNU General Public License as published by

# the Free Software Foundation, either version 3 of the License, or

# (at your option) any later version.

#

# Ansible is distributed in the hope that it will be useful,

# but WITHOUT ANY WARRANTY; without even the implied warranty of

# MERCHANTABILITY or FITNESS FOR A PARTICULAR PURPOSE. See the

# GNU General Public License for more details.

#

# You should have received a copy of the GNU General Public License

# along with Ansible. If not, see <http://www.gnu.org/licenses/>.

######################################################################

import sys

import os

import argparse

import re

from time import time

import boto

from boto import ec2

from boto import rds

from boto import elasticache

from boto import route53

import six

from six.moves import configparser

from collections import defaultdict

try:
    import json
except ImportError:
    import simplejson as json

class Ec2Inventory(object):

def _empty_inventory(self):

return {"_meta" : {"hostvars" : {}}}

def __init__(self):

''' Main execution path '''

# Inventory grouped by instance IDs, tags, security groups, regions,

# and availability zones

self.inventory = self._empty_inventory()

# Index of hostname (address) to instance ID

self.index = {}

# Boto profile to use (if any)

self.boto_profile = None

# AWS credentials.

self.credentials = {}

# Read settings and parse CLI arguments

self.parse_cli_args()

self.read_settings()

# Make sure that profile_name is not passed at all if not set

# as pre 2.24 boto will fall over otherwise

if self.boto_profile:

if not hasattr(boto.ec2.EC2Connection, 'profile_name'):

self.fail_with_error("boto version must be >= 2.24 to use profile")

# Cache

if self.args.refresh_cache:

self.do_api_calls_update_cache()

elif not self.is_cache_valid():

self.do_api_calls_update_cache()

# Data to print

if self.args.host:

data_to_print = self.get_host_info()

elif self.args.list:

# Display list of instances for inventory

if self.inventory == self._empty_inventory():

data_to_print = self.get_inventory_from_cache()

else:

data_to_print = self.json_format_dict(self.inventory, True)

print(data_to_print)

    def is_cache_valid(self):
        ''' Determines if the cache files have expired, or if it is still valid '''

        if os.path.isfile(self.cache_path_cache):
            mod_time = os.path.getmtime(self.cache_path_cache)
            current_time = time()
            if (mod_time + self.cache_max_age) > current_time:
                if os.path.isfile(self.cache_path_index):
                    return True

        return False

def read_settings(self):

''' Reads the settings from the ec2.ini file '''

if six.PY3:

config = configparser.ConfigParser()

else:

config = configparser.SafeConfigParser()

ec2_default_ini_path = os.path.join(os.path.dirname(os.path.realpath(__file__)), 'ec2.ini')

ec2_ini_path = os.path.expanduser(os.path.expandvars(os.environ.get('EC2_INI_PATH', ec2_default_ini_path)))

config.read(ec2_ini_path)

# is eucalyptus?

self.eucalyptus_host = None

self.eucalyptus = False

if config.has_option('ec2', 'eucalyptus'):

self.eucalyptus = config.getboolean('ec2', 'eucalyptus')

if self.eucalyptus and config.has_option('ec2', 'eucalyptus_host'):

self.eucalyptus_host = config.get('ec2', 'eucalyptus_host')

# Regions

self.regions = []

configRegions = config.get('ec2', 'regions')

configRegions_exclude = config.get('ec2', 'regions_exclude')

        if (configRegions == 'all'):
            if self.eucalyptus_host:
                # credentials belong to connect_euca(), not to list.append()
                self.regions.append(boto.connect_euca(host=self.eucalyptus_host, **self.credentials).region.name)
            else:
                for regionInfo in ec2.regions():
                    if regionInfo.name not in configRegions_exclude:
                        self.regions.append(regionInfo.name)
        else:
            self.regions = configRegions.split(",")

# Destination addresses

self.destination_variable = config.get('ec2', 'destination_variable')

self.vpc_destination_variable = config.get('ec2', 'vpc_destination_variable')

if config.has_option('ec2', 'hostname_variable'):

self.hostname_variable = config.get('ec2', 'hostname_variable')

else:

self.hostname_variable = None

if config.has_option('ec2', 'destination_format') and \

config.has_option('ec2', 'destination_format_tags'):

self.destination_format = config.get('ec2', 'destination_format')

self.destination_format_tags = config.get('ec2', 'destination_format_tags').split(',')

else:

self.destination_format = None

self.destination_format_tags = None

# Route53

self.route53_enabled = config.getboolean('ec2', 'route53')

self.route53_excluded_zones = []

        if config.has_option('ec2', 'route53_excluded_zones'):
            # no third positional argument: Python 3 configparser rejects it
            self.route53_excluded_zones.extend(
                config.get('ec2', 'route53_excluded_zones').split(','))

# Include RDS instances?

self.rds_enabled = True

if config.has_option('ec2', 'rds'):

self.rds_enabled = config.getboolean('ec2', 'rds')

# Include ElastiCache instances?

self.elasticache_enabled = True

if config.has_option('ec2', 'elasticache'):

self.elasticache_enabled = config.getboolean('ec2', 'elasticache')

# Return all EC2 instances?

if config.has_option('ec2', 'all_instances'):

self.all_instances = config.getboolean('ec2', 'all_instances')

else:

self.all_instances = False

# Instance states to be gathered in inventory. Default is 'running'.

# Setting 'all_instances' to 'yes' overrides this option.

ec2_valid_instance_states = [

'pending',

'running',

'shutting-down',

'terminated',

'stopping',

'stopped'

]

self.ec2_instance_states = []

if self.all_instances:

self.ec2_instance_states = ec2_valid_instance_states

elif config.has_option('ec2', 'instance_states'):

for instance_state in config.get('ec2', 'instance_states').split(','):

instance_state = instance_state.strip()

if instance_state not in ec2_valid_instance_states:

continue

self.ec2_instance_states.append(instance_state)

else:

self.ec2_instance_states = ['running']

# Return all RDS instances? (if RDS is enabled)

if config.has_option('ec2', 'all_rds_instances') and self.rds_enabled:

self.all_rds_instances = config.getboolean('ec2', 'all_rds_instances')

else:

self.all_rds_instances = False

# Return all ElastiCache replication groups? (if ElastiCache is enabled)

if config.has_option('ec2', 'all_elasticache_replication_groups') and self.elasticache_enabled:

self.all_elasticache_replication_groups = config.getboolean('ec2', 'all_elasticache_replication_groups')

else:

self.all_elasticache_replication_groups = False

# Return all ElastiCache clusters? (if ElastiCache is enabled)

if config.has_option('ec2', 'all_elasticache_clusters') and self.elasticache_enabled:

self.all_elasticache_clusters = config.getboolean('ec2', 'all_elasticache_clusters')

else:

self.all_elasticache_clusters = False

# Return all ElastiCache nodes? (if ElastiCache is enabled)

if config.has_option('ec2', 'all_elasticache_nodes') and self.elasticache_enabled:

self.all_elasticache_nodes = config.getboolean('ec2', 'all_elasticache_nodes')

else:

self.all_elasticache_nodes = False

# boto configuration profile (prefer CLI argument)

self.boto_profile = self.args.boto_profile

if config.has_option('ec2', 'boto_profile') and not self.boto_profile:

self.boto_profile = config.get('ec2', 'boto_profile')

# AWS credentials (prefer environment variables)

if not (self.boto_profile or os.environ.get('AWS_ACCESS_KEY_ID') or

os.environ.get('AWS_PROFILE')):

if config.has_option('credentials', 'aws_access_key_id'):

aws_access_key_id = config.get('credentials', 'aws_access_key_id')

else:

aws_access_key_id = None

if config.has_option('credentials', 'aws_secret_access_key'):

aws_secret_access_key = config.get('credentials', 'aws_secret_access_key')

else:

aws_secret_access_key = None

if config.has_option('credentials', 'aws_security_token'):

aws_security_token = config.get('credentials', 'aws_security_token')

else:

aws_security_token = None

if aws_access_key_id:

self.credentials = {

'aws_access_key_id': aws_access_key_id,

'aws_secret_access_key': aws_secret_access_key

}

if aws_security_token:

self.credentials['security_token'] = aws_security_token

# Cache related

cache_dir = os.path.expanduser(config.get('ec2', 'cache_path'))

if self.boto_profile:

cache_dir = os.path.join(cache_dir, 'profile_' + self.boto_profile)

if not os.path.exists(cache_dir):

os.makedirs(cache_dir)

cache_name = 'ansible-ec2'

aws_profile = lambda: (self.boto_profile or

os.environ.get('AWS_PROFILE') or

os.environ.get('AWS_ACCESS_KEY_ID') or

self.credentials.get('aws_access_key_id', None))

if aws_profile():

cache_name = '%s-%s' % (cache_name, aws_profile())

self.cache_path_cache = cache_dir + "/%s.cache" % cache_name

self.cache_path_index = cache_dir + "/%s.index" % cache_name

self.cache_max_age = config.getint('ec2', 'cache_max_age')

if config.has_option('ec2', 'expand_csv_tags'):

self.expand_csv_tags = config.getboolean('ec2', 'expand_csv_tags')

else:

self.expand_csv_tags = False

# Configure nested groups instead of flat namespace.

if config.has_option('ec2', 'nested_groups'):

self.nested_groups = config.getboolean('ec2', 'nested_groups')

else:

self.nested_groups = False

# Replace dash or not in group names

if config.has_option('ec2', 'replace_dash_in_groups'):

self.replace_dash_in_groups = config.getboolean('ec2', 'replace_dash_in_groups')

else:

self.replace_dash_in_groups = True

# Configure which groups should be created.

group_by_options = [

'group_by_instance_id',

'group_by_region',

'group_by_availability_zone',

'group_by_ami_id',

'group_by_instance_type',

'group_by_key_pair',

'group_by_vpc_id',

'group_by_security_group',

'group_by_tag_keys',

'group_by_tag_none',

'group_by_route53_names',

'group_by_rds_engine',

'group_by_rds_parameter_group',

'group_by_elasticache_engine',

'group_by_elasticache_cluster',

'group_by_elasticache_parameter_group',

'group_by_elasticache_replication_group',

]

for option in group_by_options:

if config.has_option('ec2', option):

setattr(self, option, config.getboolean('ec2', option))

else:

setattr(self, option, True)

# Do we need to just include hosts that match a pattern?

try:

pattern_include = config.get('ec2', 'pattern_include')

if pattern_include and len(pattern_include) > 0:

self.pattern_include = re.compile(pattern_include)

else:

self.pattern_include = None

except configparser.NoOptionError:

self.pattern_include = None

# Do we need to exclude hosts that match a pattern?

        try:
            pattern_exclude = config.get('ec2', 'pattern_exclude')
            if pattern_exclude and len(pattern_exclude) > 0:
                self.pattern_exclude = re.compile(pattern_exclude)
            else:
                self.pattern_exclude = None
        except configparser.NoOptionError:
            self.pattern_exclude = None

# Instance filters (see boto and EC2 API docs). Ignore invalid filters.

self.ec2_instance_filters = defaultdict(list)

if config.has_option('ec2', 'instance_filters'):

filters = [f for f in config.get('ec2', 'instance_filters').split(',') if f]

for instance_filter in filters:

instance_filter = instance_filter.strip()

if not instance_filter or '=' not in instance_filter:

continue

filter_key, filter_value = [x.strip() for x in instance_filter.split('=', 1)]

if not filter_key:

continue

self.ec2_instance_filters[filter_key].append(filter_value)

def parse_cli_args(self):

''' Command line argument processing '''

parser = argparse.ArgumentParser(description='Produce an Ansible Inventory file based on EC2')

parser.add_argument('--list', action='store_true', default=True,

help='List instances (default: True)')

parser.add_argument('--host', action='store',

help='Get all the variables about a specific instance')

parser.add_argument('--refresh-cache', action='store_true', default=False,

help='Force refresh of cache by making API requests to EC2 (default: False - use cache files)')

parser.add_argument('--profile', '--boto-profile', action='store', dest='boto_profile',

help='Use boto profile for connections to EC2')

self.args = parser.parse_args()

def do_api_calls_update_cache(self):

''' Do API calls to each region, and save data in cache files '''

if self.route53_enabled:

self.get_route53_records()

for region in self.regions:

self.get_instances_by_region(region)

if self.rds_enabled:

self.get_rds_instances_by_region(region)

if self.elasticache_enabled:

self.get_elasticache_clusters_by_region(region)

self.get_elasticache_replication_groups_by_region(region)

self.write_to_cache(self.inventory, self.cache_path_cache)

self.write_to_cache(self.index, self.cache_path_index)

def connect(self, region):

''' create connection to api server'''

if self.eucalyptus:

conn = boto.connect_euca(host=self.eucalyptus_host, **self.credentials)

conn.APIVersion = '2010-08-31'

else:

conn = self.connect_to_aws(ec2, region)

return conn

def boto_fix_security_token_in_profile(self, connect_args):

''' monkey patch for boto issue boto/boto#2100 '''

profile = 'profile ' + self.boto_profile

if boto.config.has_option(profile, 'aws_security_token'):

connect_args['security_token'] = boto.config.get(profile, 'aws_security_token')

return connect_args

def connect_to_aws(self, module, region):

connect_args = self.credentials

# only pass the profile name if it's set (as it is not supported by older boto versions)

if self.boto_profile:

connect_args['profile_name'] = self.boto_profile

self.boto_fix_security_token_in_profile(connect_args)

conn = module.connect_to_region(region, **connect_args)

# connect_to_region will fail "silently" by returning None if the region name is wrong or not supported

if conn is None:

self.fail_with_error("region name: %s likely not supported, or AWS is down. connection to region failed." % region)

return conn

def get_instances_by_region(self, region):

''' Makes an AWS EC2 API call to the list of instances in a particular

region '''

try:

conn = self.connect(region)

reservations = []

if self.ec2_instance_filters:

for filter_key, filter_values in self.ec2_instance_filters.items():

reservations.extend(conn.get_all_instances(filters = { filter_key : filter_values }))

else:

reservations = conn.get_all_instances()

# Pull the tags back in a second step

# AWS are on record as saying that the tags fetched in the first `get_all_instances` request are not

# reliable and may be missing, and the only way to guarantee they are there is by calling `get_all_tags`

instance_ids = []

for reservation in reservations:

instance_ids.extend([instance.id for instance in reservation.instances])

max_filter_value = 199

tags = []

for i in range(0, len(instance_ids), max_filter_value):

tags.extend(conn.get_all_tags(filters={'resource-type': 'instance', 'resource-id': instance_ids[i:i+max_filter_value]}))

tags_by_instance_id = defaultdict(dict)

for tag in tags:

tags_by_instance_id[tag.res_id][tag.name] = tag.value

for reservation in reservations:

for instance in reservation.instances:

instance.tags = tags_by_instance_id[instance.id]

self.add_instance(instance, region)

except boto.exception.BotoServerError as e:

if e.error_code == 'AuthFailure':

error = self.get_auth_error_message()

else:

backend = 'Eucalyptus' if self.eucalyptus else 'AWS'

error = "Error connecting to %s backend.\n%s" % (backend, e.message)

self.fail_with_error(error, 'getting EC2 instances')

def get_rds_instances_by_region(self, region):

''' Makes an AWS API call to list the RDS instances in a particular

region '''

try:

conn = self.connect_to_aws(rds, region)

if conn:

marker = None

while True:

instances = conn.get_all_dbinstances(marker=marker)

marker = instances.marker

for instance in instances:

self.add_rds_instance(instance, region)

if not marker:

break

except boto.exception.BotoServerError as e:

error = e.reason

if e.error_code == 'AuthFailure':

error = self.get_auth_error_message()

if not e.reason == "Forbidden":

error = "Looks like AWS RDS is down:\n%s" % e.message

self.fail_with_error(error, 'getting RDS instances')

def get_elasticache_clusters_by_region(self, region):

''' Makes an AWS API call to list the ElastiCache clusters (with

nodes' info) in a particular region.'''

# ElastiCache boto module doesn't provide a get_all_instances method,

# that's why we need to call describe directly (it would be called by

# the shorthand method anyway...)

try:

conn = self.connect_to_aws(elasticache, region)

if conn:

# show_cache_node_info = True

# because we also want nodes' information

response = conn.describe_cache_clusters(None, None, None, True)

except boto.exception.BotoServerError as e:

error = e.reason

if e.error_code == 'AuthFailure':

error = self.get_auth_error_message()

if not e.reason == "Forbidden":

error = "Looks like AWS ElastiCache is down:\n%s" % e.message

self.fail_with_error(error, 'getting ElastiCache clusters')

try:

# Boto also doesn't provide wrapper classes to CacheClusters or

# CacheNodes. Because of that we can't make use of the get_list

# method in the AWSQueryConnection. Let's do the work manually

clusters = response['DescribeCacheClustersResponse']['DescribeCacheClustersResult']['CacheClusters']

except KeyError as e:

error = "ElastiCache query to AWS failed (unexpected format)."

self.fail_with_error(error, 'getting ElastiCache clusters')

for cluster in clusters:

self.add_elasticache_cluster(cluster, region)

def get_elasticache_replication_groups_by_region(self, region):

''' Makes an AWS API call to list the ElastiCache replication groups

in a particular region.'''

# ElastiCache boto module doesn't provide a get_all_instances method,

# that's why we need to call describe directly (it would be called by

# the shorthand method anyway...)

try:

conn = self.connect_to_aws(elasticache, region)

if conn:

response = conn.describe_replication_groups()

except boto.exception.BotoServerError as e:

error = e.reason

if e.error_code == 'AuthFailure':

error = self.get_auth_error_message()

if not e.reason == "Forbidden":

error = "Looks like AWS ElastiCache [Replication Groups] is down:\n%s" % e.message

self.fail_with_error(error, 'getting ElastiCache replication groups')

try:

# Boto also doesn't provide wrapper classes to ReplicationGroups

# Because of that we can't make use of the get_list method in the

# AWSQueryConnection. Let's do the work manually

replication_groups = response['DescribeReplicationGroupsResponse']['DescribeReplicationGroupsResult']['ReplicationGroups']

except KeyError as e:

error = "ElastiCache [Replication Groups] query to AWS failed (unexpected format)."

self.fail_with_error(error, 'getting ElastiCache replication groups')

for replication_group in replication_groups:

self.add_elasticache_replication_group(replication_group, region)

def get_auth_error_message(self):

''' create an informative error message if there is an issue authenticating'''

errors = ["Authentication error retrieving ec2 inventory."]

if None in [os.environ.get('AWS_ACCESS_KEY_ID'), os.environ.get('AWS_SECRET_ACCESS_KEY')]:

errors.append(' - No AWS_ACCESS_KEY_ID or AWS_SECRET_ACCESS_KEY environment vars found')

else:

errors.append(' - AWS_ACCESS_KEY_ID and AWS_SECRET_ACCESS_KEY environment vars found but may not be correct')

boto_paths = ['/etc/boto.cfg', '~/.boto', '~/.aws/credentials']

boto_config_found = list(p for p in boto_paths if os.path.isfile(os.path.expanduser(p)))

if len(boto_config_found) > 0:

errors.append(" - Boto configs found at '%s', but the credentials contained may not be correct" % ', '.join(boto_config_found))

else:

errors.append(" - No Boto config found at any expected location '%s'" % ', '.join(boto_paths))

return '\n'.join(errors)

def fail_with_error(self, err_msg, err_operation=None):

'''Log an error to stderr for ansible-playbook to consume, then exit'''

if err_operation:

err_msg = 'ERROR: "{err_msg}", while: {err_operation}'.format(

err_msg=err_msg, err_operation=err_operation)

sys.stderr.write(err_msg)

sys.exit(1)

def get_instance(self, region, instance_id):

conn = self.connect(region)

reservations = conn.get_all_instances([instance_id])

for reservation in reservations:

for instance in reservation.instances:

return instance

def add_instance(self, instance, region):

''' Adds an instance to the inventory and index, as long as it is

addressable '''

# Only return instances with desired instance states

if instance.state not in self.ec2_instance_states:

return

# Select the best destination address

if self.destination_format and self.destination_format_tags:

dest = self.destination_format.format(*[ getattr(instance, 'tags').get(tag, '') for tag in self.destination_format_tags ])

elif instance.subnet_id:

dest = getattr(instance, self.vpc_destination_variable, None)

if dest is None:

dest = getattr(instance, 'tags').get(self.vpc_destination_variable, None)

else:

dest = getattr(instance, self.destination_variable, None)

if dest is None:

dest = getattr(instance, 'tags').get(self.destination_variable, None)

if not dest:

# Skip instances we cannot address (e.g. private VPC subnet)

return

# Set the inventory name

hostname = None

if self.hostname_variable:

if self.hostname_variable.startswith('tag_'):

hostname = instance.tags.get(self.hostname_variable[4:], None)

else:

hostname = getattr(instance, self.hostname_variable)

# If we can't get a nice hostname, use the destination address

if not hostname:

hostname = dest

else:

hostname = self.to_safe(hostname).lower()

# if we only want to include hosts that match a pattern, skip those that don't

if self.pattern_include and not self.pattern_include.match(hostname):

return

# if we need to exclude hosts that match a pattern, skip those

if self.pattern_exclude and self.pattern_exclude.match(hostname):

return

# Add to index

self.index[hostname] = [region, instance.id]

# Inventory: Group by instance ID (always a group of 1)

if self.group_by_instance_id:

self.inventory[instance.id] = [hostname]

if self.nested_groups:

self.push_group(self.inventory, 'instances', instance.id)

# Inventory: Group by region

if self.group_by_region:

self.push(self.inventory, region, hostname)

if self.nested_groups:

self.push_group(self.inventory, 'regions', region)

# Inventory: Group by availability zone

if self.group_by_availability_zone:

self.push(self.inventory, instance.placement, hostname)

if self.nested_groups:

if self.group_by_region:

self.push_group(self.inventory, region, instance.placement)

self.push_group(self.inventory, 'zones', instance.placement)

# Inventory: Group by Amazon Machine Image (AMI) ID

if self.group_by_ami_id:

ami_id = self.to_safe(instance.image_id)

self.push(self.inventory, ami_id, hostname)

if self.nested_groups:

self.push_group(self.inventory, 'images', ami_id)

# Inventory: Group by instance type

if self.group_by_instance_type:

type_name = self.to_safe('type_' + instance.instance_type)

self.push(self.inventory, type_name, hostname)

if self.nested_groups:

self.push_group(self.inventory, 'types', type_name)

# Inventory: Group by key pair

if self.group_by_key_pair and instance.key_name:

key_name = self.to_safe('key_' + instance.key_name)

self.push(self.inventory, key_name, hostname)

if self.nested_groups:

self.push_group(self.inventory, 'keys', key_name)

# Inventory: Group by VPC

if self.group_by_vpc_id and instance.vpc_id:

vpc_id_name = self.to_safe('vpc_id_' + instance.vpc_id)

self.push(self.inventory, vpc_id_name, hostname)

if self.nested_groups:

self.push_group(self.inventory, 'vpcs', vpc_id_name)

# Inventory: Group by security group

if self.group_by_security_group:

try:

for group in instance.groups:

key = self.to_safe("security_group_" + group.name)

self.push(self.inventory, key, hostname)

if self.nested_groups:

self.push_group(self.inventory, 'security_groups', key)

except AttributeError:

self.fail_with_error('\n'.join(['Package boto seems a bit older.',

'Please upgrade boto >= 2.3.0.']))

# Inventory: Group by tag keys

if self.group_by_tag_keys:

for k, v in instance.tags.items():

if self.expand_csv_tags and v and ',' in v:

values = [x.strip() for x in v.split(',')]

else:

values = [v]

for v in values:

if v:

key = self.to_safe("tag_" + k + "=" + v)

else:

key = self.to_safe("tag_" + k)

self.push(self.inventory, key, hostname)

if self.nested_groups:

self.push_group(self.inventory, 'tags', self.to_safe("tag_" + k))

if v:

self.push_group(self.inventory, self.to_safe("tag_" + k), key)

# Inventory: Group by Route53 domain names if enabled

if self.route53_enabled and self.group_by_route53_names:

route53_names = self.get_instance_route53_names(instance)

for name in route53_names:

self.push(self.inventory, name, hostname)

if self.nested_groups:

self.push_group(self.inventory, 'route53', name)

# Global Tag: instances without tags

if self.group_by_tag_none and len(instance.tags) == 0:

self.push(self.inventory, 'tag_none', hostname)

if self.nested_groups:

self.push_group(self.inventory, 'tags', 'tag_none')

# Global Tag: tag all EC2 instances

self.push(self.inventory, 'ec2', hostname)

self.inventory["_meta"]["hostvars"][hostname] = self.get_host_info_dict_from_instance(instance)

self.inventory["_meta"]["hostvars"][hostname]['ansible_ssh_host'] = dest

def add_rds_instance(self, instance, region):

''' Adds an RDS instance to the inventory and index, as long as it is

addressable '''

# Only want available instances unless all_rds_instances is True

if not self.all_rds_instances and instance.status != 'available':

return

# Select the best destination address

dest = instance.endpoint[0]

if not dest:

# Skip instances we cannot address (e.g. private VPC subnet)

return

# Set the inventory name

hostname = None

if self.hostname_variable:

if self.hostname_variable.startswith('tag_'):

hostname = instance.tags.get(self.hostname_variable[4:], None)

else:

hostname = getattr(instance, self.hostname_variable)

# If we can't get a nice hostname, use the destination address

if not hostname:

hostname = dest

hostname = self.to_safe(hostname).lower()

# Add to index

self.index[hostname] = [region, instance.id]

# Inventory: Group by instance ID (always a group of 1)

if self.group_by_instance_id:

self.inventory[instance.id] = [hostname]

if self.nested_groups:

self.push_group(self.inventory, 'instances', instance.id)

# Inventory: Group by region

if self.group_by_region:

self.push(self.inventory, region, hostname)

if self.nested_groups:

self.push_group(self.inventory, 'regions', region)

# Inventory: Group by availability zone

if self.group_by_availability_zone:

self.push(self.inventory, instance.availability_zone, hostname)

if self.nested_groups:

if self.group_by_region:

self.push_group(self.inventory, region, instance.availability_zone)

self.push_group(self.inventory, 'zones', instance.availability_zone)

# Inventory: Group by instance type

if self.group_by_instance_type:

type_name = self.to_safe('type_' + instance.instance_class)

self.push(self.inventory, type_name, hostname)

if self.nested_groups:

self.push_group(self.inventory, 'types', type_name)

# Inventory: Group by VPC

if self.group_by_vpc_id and instance.subnet_group and instance.subnet_group.vpc_id:

vpc_id_name = self.to_safe('vpc_id_' + instance.subnet_group.vpc_id)

self.push(self.inventory, vpc_id_name, hostname)

if self.nested_groups:

self.push_group(self.inventory, 'vpcs', vpc_id_name)

# Inventory: Group by security group

if self.group_by_security_group:

try:

if instance.security_group:

key = self.to_safe("security_group_" + instance.security_group.name)

self.push(self.inventory, key, hostname)

if self.nested_groups:

self.push_group(self.inventory, 'security_groups', key)

except AttributeError:

self.fail_with_error('\n'.join(['Package boto seems a bit older.',

'Please upgrade boto >= 2.3.0.']))

# Inventory: Group by engine

if self.group_by_rds_engine:

self.push(self.inventory, self.to_safe("rds_" + instance.engine), hostname)

if self.nested_groups:

self.push_group(self.inventory, 'rds_engines', self.to_safe("rds_" + instance.engine))

# Inventory: Group by parameter group

if self.group_by_rds_parameter_group:

self.push(self.inventory, self.to_safe("rds_parameter_group_" + instance.parameter_group.name), hostname)

if self.nested_groups:

self.push_group(self.inventory, 'rds_parameter_groups', self.to_safe("rds_parameter_group_" + instance.parameter_group.name))

# Global Tag: all RDS instances

self.push(self.inventory, 'rds', hostname)

self.inventory["_meta"]["hostvars"][hostname] = self.get_host_info_dict_from_instance(instance)

self.inventory["_meta"]["hostvars"][hostname]['ansible_ssh_host'] = dest

def add_elasticache_cluster(self, cluster, region):

''' Adds an ElastiCache cluster to the inventory and index, as long as

its nodes are addressable '''

# Only want available clusters unless all_elasticache_clusters is True

if not self.all_elasticache_clusters and cluster['CacheClusterStatus'] != 'available':

return

# Select the best destination address

if 'ConfigurationEndpoint' in cluster and cluster['ConfigurationEndpoint']:

# Memcached cluster

dest = cluster['ConfigurationEndpoint']['Address']

is_redis = False

else:

# Redis single node cluster

# Because all Redis clusters are single nodes, we'll merge the

# info from the cluster with info about the node

dest = cluster['CacheNodes'][0]['Endpoint']['Address']

is_redis = True

if not dest:

# Skip clusters we cannot address (e.g. private VPC subnet)

return

# Add to index

self.index[dest] = [region, cluster['CacheClusterId']]

# Inventory: Group by instance ID (always a group of 1)

if self.group_by_instance_id:

self.inventory[cluster['CacheClusterId']] = [dest]

if self.nested_groups:

self.push_group(self.inventory, 'instances', cluster['CacheClusterId'])

# Inventory: Group by region

if self.group_by_region and not is_redis:

self.push(self.inventory, region, dest)

if self.nested_groups:

self.push_group(self.inventory, 'regions', region)

# Inventory: Group by availability zone

if self.group_by_availability_zone and not is_redis:

self.push(self.inventory, cluster['PreferredAvailabilityZone'], dest)

if self.nested_groups:

if self.group_by_region:

self.push_group(self.inventory, region, cluster['PreferredAvailabilityZone'])

self.push_group(self.inventory, 'zones', cluster['PreferredAvailabilityZone'])

# Inventory: Group by node type

if self.group_by_instance_type and not is_redis:

type_name = self.to_safe('type_' + cluster['CacheNodeType'])

self.push(self.inventory, type_name, dest)

if self.nested_groups:

self.push_group(self.inventory, 'types', type_name)

# Inventory: Group by VPC (information not available in the current

# AWS API version for ElastiCache)

# Inventory: Group by security group

if self.group_by_security_group and not is_redis:

# Check for the existence of the 'SecurityGroups' key and also if

# this key has some value. When the cluster is not placed in a SG

# the query can return None here and cause an error.

if 'SecurityGroups' in cluster and cluster['SecurityGroups'] is not None:

for security_group in cluster['SecurityGroups']:

key = self.to_safe("security_group_" + security_group['SecurityGroupId'])

self.push(self.inventory, key, dest)

if self.nested_groups:

self.push_group(self.inventory, 'security_groups', key)

# Inventory: Group by engine

if self.group_by_elasticache_engine and not is_redis:

self.push(self.inventory, self.to_safe("elasticache_" + cluster['Engine']), dest)

if self.nested_groups:

self.push_group(self.inventory, 'elasticache_engines', self.to_safe("elasticache_" + cluster['Engine']))

# Inventory: Group by parameter group

if self.group_by_elasticache_parameter_group:

self.push(self.inventory, self.to_safe("elasticache_parameter_group_" + cluster['CacheParameterGroup']['CacheParameterGroupName']), dest)

if self.nested_groups:

self.push_group(self.inventory, 'elasticache_parameter_groups', self.to_safe(cluster['CacheParameterGroup']['CacheParameterGroupName']))

# Inventory: Group by replication group

if self.group_by_elasticache_replication_group and 'ReplicationGroupId' in cluster and cluster['ReplicationGroupId']:

self.push(self.inventory, self.to_safe("elasticache_replication_group_" + cluster['ReplicationGroupId']), dest)

if self.nested_groups:

self.push_group(self.inventory, 'elasticache_replication_groups', self.to_safe(cluster['ReplicationGroupId']))

# Global Tag: all ElastiCache clusters

self.push(self.inventory, 'elasticache_clusters', cluster['CacheClusterId'])

host_info = self.get_host_info_dict_from_describe_dict(cluster)

self.inventory["_meta"]["hostvars"][dest] = host_info

# Add the nodes

for node in cluster['CacheNodes']:

self.add_elasticache_node(node, cluster, region)

def add_elasticache_node(self, node, cluster, region):

''' Adds an ElastiCache node to the inventory and index, as long as

it is addressable '''

# Only want available nodes unless all_elasticache_nodes is True

if not self.all_elasticache_nodes and node['CacheNodeStatus'] != 'available':

return

# Select the best destination address

dest = node['Endpoint']['Address']

if not dest:

# Skip nodes we cannot address (e.g. private VPC subnet)

return

node_id = self.to_safe(cluster['CacheClusterId'] + '_' + node['CacheNodeId'])

# Add to index

self.index[dest] = [region, node_id]

# Inventory: Group by node ID (always a group of 1)

if self.group_by_instance_id:

self.inventory[node_id] = [dest]

if self.nested_groups:

self.push_group(self.inventory, 'instances', node_id)

# Inventory: Group by region

if self.group_by_region:

self.push(self.inventory, region, dest)

if self.nested_groups:

self.push_group(self.inventory, 'regions', region)

# Inventory: Group by availability zone

if self.group_by_availability_zone:

self.push(self.inventory, cluster['PreferredAvailabilityZone'], dest)

if self.nested_groups:

if self.group_by_region:

self.push_group(self.inventory, region, cluster['PreferredAvailabilityZone'])

self.push_group(self.inventory, 'zones', cluster['PreferredAvailabilityZone'])

# Inventory: Group by node type

if self.group_by_instance_type:

type_name = self.to_safe('type_' + cluster['CacheNodeType'])

self.push(self.inventory, type_name, dest)

if self.nested_groups:

self.push_group(self.inventory, 'types', type_name)

# Inventory: Group by VPC (information not available in the current

# AWS API version for ElastiCache)

# Inventory: Group by security group

if self.group_by_security_group:

# Check for the existence of the 'SecurityGroups' key and also if

# this key has some value. When the cluster is not placed in a SG

# the query can return None here and cause an error.

if 'SecurityGroups' in cluster and cluster['SecurityGroups'] is not None:

for security_group in cluster['SecurityGroups']:

key = self.to_safe("security_group_" + security_group['SecurityGroupId'])

self.push(self.inventory, key, dest)

if self.nested_groups:

self.push_group(self.inventory, 'security_groups', key)

# Inventory: Group by engine

if self.group_by_elasticache_engine:

self.push(self.inventory, self.to_safe("elasticache_" + cluster['Engine']), dest)

if self.nested_groups:

self.push_group(self.inventory, 'elasticache_engines', self.to_safe("elasticache_" + cluster['Engine']))

# Inventory: Group by parameter group (done at cluster level)

# Inventory: Group by replication group (done at cluster level)

# Inventory: Group by ElastiCache Cluster

if self.group_by_elasticache_cluster:

self.push(self.inventory, self.to_safe("elasticache_cluster_" + cluster['CacheClusterId']), dest)

# Global Tag: all ElastiCache nodes

self.push(self.inventory, 'elasticache_nodes', dest)

host_info = self.get_host_info_dict_from_describe_dict(node)

if dest in self.inventory["_meta"]["hostvars"]:

self.inventory["_meta"]["hostvars"][dest].update(host_info)

else:

self.inventory["_meta"]["hostvars"][dest] = host_info

def add_elasticache_replication_group(self, replication_group, region):

''' Adds an ElastiCache replication group to the inventory and index '''

# Only want available clusters unless all_elasticache_replication_groups is True

if not self.all_elasticache_replication_groups and replication_group['Status'] != 'available':

return

# Select the best destination address (PrimaryEndpoint)

dest = replication_group['NodeGroups'][0]['PrimaryEndpoint']['Address']

if not dest:

# Skip clusters we cannot address (e.g. private VPC subnet)

return

# Add to index

self.index[dest] = [region, replication_group['ReplicationGroupId']]

# Inventory: Group by ID (always a group of 1)

if self.group_by_instance_id:

self.inventory[replication_group['ReplicationGroupId']] = [dest]

if self.nested_groups:

self.push_group(self.inventory, 'instances', replication_group['ReplicationGroupId'])

# Inventory: Group by region

if self.group_by_region:

self.push(self.inventory, region, dest)

if self.nested_groups:

self.push_group(self.inventory, 'regions', region)

# Inventory: Group by availability zone (doesn't apply to replication groups)

# Inventory: Group by node type (doesn't apply to replication groups)

# Inventory: Group by VPC (information not available in the current

# AWS API version for replication groups)

# Inventory: Group by security group (doesn't apply to replication groups)

# Check this value in cluster level

# Inventory: Group by engine (replication groups are always Redis)

if self.group_by_elasticache_engine:

self.push(self.inventory, 'elasticache_redis', dest)

if self.nested_groups:

self.push_group(self.inventory, 'elasticache_engines', 'elasticache_redis')

# Global Tag: all ElastiCache clusters

self.push(self.inventory, 'elasticache_replication_groups', replication_group['ReplicationGroupId'])

host_info = self.get_host_info_dict_from_describe_dict(replication_group)

self.inventory["_meta"]["hostvars"][dest] = host_info

def get_route53_records(self):

''' Get and store the map of resource records to domain names that

point to them. '''

r53_conn = route53.Route53Connection()

all_zones = r53_conn.get_zones()

route53_zones = [ zone for zone in all_zones if zone.name[:-1]

not in self.route53_excluded_zones ]

self.route53_records = {}

for zone in route53_zones:

rrsets = r53_conn.get_all_rrsets(zone.id)

for record_set in rrsets:

record_name = record_set.name

if record_name.endswith('.'):

record_name = record_name[:-1]

for resource in record_set.resource_records:

self.route53_records.setdefault(resource, set())

self.route53_records[resource].add(record_name)

def get_instance_route53_names(self, instance):

''' Check if an instance is referenced in the records we have from

Route53. If it is, return the list of domain names pointing to said

instance. If nothing points to it, return an empty list. '''

instance_attributes = [ 'public_dns_name', 'private_dns_name',

'ip_address', 'private_ip_address' ]

name_list = set()

for attrib in instance_attributes:

try:

value = getattr(instance, attrib)

except AttributeError:

continue

if value in self.route53_records:

name_list.update(self.route53_records[value])

return list(name_list)

def get_host_info_dict_from_instance(self, instance):

instance_vars = {}

for key in vars(instance):

value = getattr(instance, key)

key = self.to_safe('ec2_' + key)

# Handle complex types

# state/previous_state changed to properties in boto in https://github.com/boto/boto/commit/a23c379837f698212252720d2af8dec0325c9518

if key == 'ec2__state':

instance_vars['ec2_state'] = instance.state or ''

instance_vars['ec2_state_code'] = instance.state_code

elif key == 'ec2__previous_state':

instance_vars['ec2_previous_state'] = instance.previous_state or ''

instance_vars['ec2_previous_state_code'] = instance.previous_state_code

elif type(value) in [int, bool]:

instance_vars[key] = value

elif isinstance(value, six.string_types):

instance_vars[key] = value.strip()

elif value is None:

instance_vars[key] = ''

elif key == 'ec2_region':

instance_vars[key] = value.name

elif key == 'ec2__placement':

instance_vars['ec2_placement'] = value.zone

elif key == 'ec2_tags':

for k, v in value.items():

if self.expand_csv_tags and ',' in v:

v = [x.strip() for x in v.split(',')]

key = self.to_safe('ec2_tag_' + k)

instance_vars[key] = v

elif key == 'ec2_groups':

group_ids = []

group_names = []

for group in value:

group_ids.append(group.id)

group_names.append(group.name)

instance_vars["ec2_security_group_ids"] = ','.join([str(i) for i in group_ids])

instance_vars["ec2_security_group_names"] = ','.join([str(i) for i in group_names])

else:

pass

# TODO Product codes if someone finds them useful

#print key

#print type(value)

#print value

return instance_vars

def get_host_info_dict_from_describe_dict(self, describe_dict):

''' Parses the dictionary returned by the API call into a flat list

of parameters. This method should be used only when 'describe' is

used directly because Boto doesn't provide specific classes. '''

# I really don't agree with prefixing everything with 'ec2'

# because EC2, RDS and ElastiCache are different services.

# I'm just following the pattern used until now to not break any

# compatibility.

host_info = {}

for key in describe_dict:

value = describe_dict[key]

key = self.to_safe('ec2_' + self.uncammelize(key))

# Handle complex types

# Target: Memcached Cache Clusters

if key == 'ec2_configuration_endpoint' and value:

host_info['ec2_configuration_endpoint_address'] = value['Address']

host_info['ec2_configuration_endpoint_port'] = value['Port']

# Target: Cache Nodes and Redis Cache Clusters (single node)

if key == 'ec2_endpoint' and value:

host_info['ec2_endpoint_address'] = value['Address']

host_info['ec2_endpoint_port'] = value['Port']

# Target: Redis Replication Groups

if key == 'ec2_node_groups' and value:

host_info['ec2_endpoint_address'] = value[0]['PrimaryEndpoint']['Address']

host_info['ec2_endpoint_port'] = value[0]['PrimaryEndpoint']['Port']

replica_count = 0

for node in value[0]['NodeGroupMembers']:

if node['CurrentRole'] == 'primary':

host_info['ec2_primary_cluster_address'] = node['ReadEndpoint']['Address']

host_info['ec2_primary_cluster_port'] = node['ReadEndpoint']['Port']

host_info['ec2_primary_cluster_id'] = node['CacheClusterId']

elif node['CurrentRole'] == 'replica':

host_info['ec2_replica_cluster_address_'+ str(replica_count)] = node['ReadEndpoint']['Address']

host_info['ec2_replica_cluster_port_'+ str(replica_count)] = node['ReadEndpoint']['Port']

host_info['ec2_replica_cluster_id_'+ str(replica_count)] = node['CacheClusterId']

replica_count += 1

# Target: Redis Replication Groups

if key == 'ec2_member_clusters' and value:

host_info['ec2_member_clusters'] = ','.join([str(i) for i in value])

# Target: All Cache Clusters

elif key == 'ec2_cache_parameter_group':

host_info["ec2_cache_node_ids_to_reboot"] = ','.join([str(i) for i in value['CacheNodeIdsToReboot']])

host_info['ec2_cache_parameter_group_name'] = value['CacheParameterGroupName']

host_info['ec2_cache_parameter_apply_status'] = value['ParameterApplyStatus']

# Target: Almost everything

elif key == 'ec2_security_groups':

# Skip if SecurityGroups is None

# (it is possible to have the key defined but no value in it).

if value is not None:

sg_ids = []

for sg in value:

sg_ids.append(sg['SecurityGroupId'])

host_info["ec2_security_group_ids"] = ','.join([str(i) for i in sg_ids])

# Target: Everything

# Preserve booleans and integers

elif type(value) in [int, bool]:

host_info[key] = value

# Target: Everything

# Sanitize string values

elif isinstance(value, six.string_types):

host_info[key] = value.strip()

# Target: Everything

# Replace None by an empty string

elif value is None:

host_info[key] = ''

else:

# Remove non-processed complex types

pass

return host_info

def get_host_info(self):

''' Get variables about a specific host '''

if len(self.index) == 0:

# Need to load index from cache

self.load_index_from_cache()

if not self.args.host in self.index:

# try updating the cache

self.do_api_calls_update_cache()

if not self.args.host in self.index:

# host might not exist anymore

return self.json_format_dict({}, True)

(region, instance_id) = self.index[self.args.host]

instance = self.get_instance(region, instance_id)

return self.json_format_dict(self.get_host_info_dict_from_instance(instance), True)

def push(self, my_dict, key, element):

''' Push an element onto an array that may not have been defined in

the dict '''

group_info = my_dict.setdefault(key, [])

if isinstance(group_info, dict):

host_list = group_info.setdefault('hosts', [])

host_list.append(element)

else:

group_info.append(element)

def push_group(self, my_dict, key, element):

''' Push a group as a child of another group. '''

parent_group = my_dict.setdefault(key, {})

if not isinstance(parent_group, dict):

parent_group = my_dict[key] = {'hosts': parent_group}

child_groups = parent_group.setdefault('children', [])

if element not in child_groups:

child_groups.append(element)

def get_inventory_from_cache(self):

''' Reads the inventory from the cache file and returns it as a JSON

object '''

with open(self.cache_path_cache, 'r') as cache:

return cache.read()

def load_index_from_cache(self):

''' Reads the index from the cache file and sets self.index '''

with open(self.cache_path_index, 'r') as cache:

self.index = json.load(cache)

def write_to_cache(self, data, filename):

''' Writes data in JSON format to a file '''

json_data = self.json_format_dict(data, True)

cache = open(filename, 'w')

cache.write(json_data)

cache.close()

def uncammelize(self, key):

temp = re.sub('(.)([A-Z][a-z]+)', r'\1_\2', key)

return re.sub('([a-z0-9])([A-Z])', r'\1_\2', temp).lower()

def to_safe(self, word):

''' Converts 'bad' characters in a string to underscores so they can be used as Ansible groups '''

regex = r"[^A-Za-z0-9\_"

if not self.replace_dash_in_groups:

regex += r"\-"

return re.sub(regex + "]", "_", word)

def json_format_dict(self, data, pretty=False):

''' Converts a dict to a JSON object and dumps it as a formatted

string '''

if pretty:

return json.dumps(data, sort_keys=True, indent=2)

else:

return json.dumps(data)

# Run the script

Ec2Inventory()
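To see what the nested_groups option does to the inventory, here is a minimal standalone sketch of the push and push_group helpers from the script above. They are rewritten as plain functions (an assumption for illustration, in the script they are methods of Ec2Inventory), and the host address and group names are made up.

```python
def push(inventory, key, element):
    """Append a host to a group, whether the group is a plain list or a dict."""
    group_info = inventory.setdefault(key, [])
    if isinstance(group_info, dict):
        group_info.setdefault('hosts', []).append(element)
    else:
        group_info.append(element)


def push_group(inventory, key, element):
    """Register `element` as a child group of the group named `key`."""
    parent_group = inventory.setdefault(key, {})
    if not isinstance(parent_group, dict):
        # Promote a plain host list to the dict form so it can hold children.
        parent_group = inventory[key] = {'hosts': parent_group}
    child_groups = parent_group.setdefault('children', [])
    if element not in child_groups:
        child_groups.append(element)


# Hypothetical host and group names, only for illustration.
inventory = {}
push(inventory, 'us-east-1', '10.0.0.5')          # group by region
push_group(inventory, 'regions', 'us-east-1')     # nested: regions -> us-east-1
push(inventory, 'us-east-1a', '10.0.0.5')         # group by availability zone
push_group(inventory, 'us-east-1', 'us-east-1a')  # nested: us-east-1 -> us-east-1a
print(inventory)
```

Note that a group starts as a plain list of hosts and only becomes a dict with 'hosts' and 'children' keys once a child group is attached to it, so anything reading the inventory has to handle both shapes.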

=============================================

./ec2.py --list
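The --list output is JSON where each group is either a plain list of hosts or a dict with 'hosts' and 'children'. Below is a rough sketch of how that output could be walked to collect all hosts of a group. The function name hosts_in_group and the sample JSON are assumptions for illustration, not real output from my account.

```python
import json


def hosts_in_group(inventory, group, _seen=None):
    """Return the hosts of a group, following 'children' recursively."""
    seen = _seen if _seen is not None else set()
    if group in seen or group not in inventory:
        return []
    seen.add(group)  # guard against groups that reference each other
    entry = inventory[group]
    if isinstance(entry, list):
        return list(entry)
    hosts = list(entry.get('hosts', []))
    for child in entry.get('children', []):
        for host in hosts_in_group(inventory, child, seen):
            if host not in hosts:
                hosts.append(host)
    return hosts


# Hypothetical sample shaped like the script's output.
sample_output = '''
{
  "_meta": {"hostvars": {"10.0.0.5": {"ec2_state": "running"},
                         "10.0.1.7": {"ec2_state": "running"}}},
  "ec2": ["10.0.0.5", "10.0.1.7"],
  "regions": {"children": ["us-east-1", "us-west-2"]},
  "us-east-1": ["10.0.0.5"],
  "us-west-2": ["10.0.1.7"]
}
'''
inventory = json.loads(sample_output)
print(hosts_in_group(inventory, 'regions'))
print(hosts_in_group(inventory, 'us-east-1'))
```

Against a real setup, the JSON string would come from running ./ec2.py --list (for example through subprocess.check_output) instead of the sample text.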

===========================

awscli

===========================

Web console: create an IAM user group with the policy --> AmazonEC2FullAccess

Add the user to the group and tick Programmatic access (API calls)

Download the CSV (access key ID and secret access key)

yum install epel-release -y

yum install python-pip -y

pip list

yum install awscli botocore -y

aws

aws configure

vi ~/.aws/credentials

[default]

aws_access_key_id = AKIAIZxxxxJ4L3P4A

aws_secret_access_key = I6xxxxxxxxxxxxxxxxxxxxx0UKN7Az

vi ~/.aws/config

[default]

output = text

region = us-east-1
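Both files are plain INI files, so a quick way to check what a profile contains is to parse them with Python's configparser. This is only a sketch: the read_profile helper and the sample key values are made up for illustration, and it reads from strings instead of the real files under ~/.aws.

```python
import configparser

# Hypothetical contents shaped like ~/.aws/credentials and ~/.aws/config.
SAMPLE_CREDENTIALS = """
[default]
aws_access_key_id = AKIAEXAMPLEKEYID
aws_secret_access_key = exampleSecretKeyValue
"""

SAMPLE_CONFIG = """
[default]
output = text
region = us-east-1
"""


def read_profile(credentials_text, config_text, profile='default'):
    """Merge the credentials and config settings of one profile into a dict."""
    creds = configparser.ConfigParser()
    creds.read_string(credentials_text)
    conf = configparser.ConfigParser()
    conf.read_string(config_text)
    return {
        'access_key_id': creds.get(profile, 'aws_access_key_id'),
        'secret_access_key': creds.get(profile, 'aws_secret_access_key'),
        'region': conf.get(profile, 'region', fallback=None),
        'output': conf.get(profile, 'output', fallback=None),
    }


profile = read_profile(SAMPLE_CREDENTIALS, SAMPLE_CONFIG)
print(profile['region'], profile['output'])
```

To read the real files, replace read_string with read and pass os.path.expanduser('~/.aws/credentials') and os.path.expanduser('~/.aws/config').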

aws ec2 describe-regions

aws ec2 describe-instances

aws ec2 describe-instances --output table

aws iam list-access-keys (for this, you will need to add administrative access to the IAM group)

<command> <service> .....

aws rds create-db-snapshot --db-snapshot-identifier postgresqlsnap --db-instance-identifier postgresql

aws rds delete-db-snapshot --db-snapshot-identifier postgresqlsnap
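If the snapshot commands need to be scripted, one option is to build the argument lists in Python and hand them to subprocess. The sketch below only builds and prints the commands, using the same flags as above. The two helper function names are assumptions for illustration.

```python
import shlex


def create_snapshot_argv(instance_id, snapshot_id):
    """Argument list for `aws rds create-db-snapshot`."""
    return ['aws', 'rds', 'create-db-snapshot',
            '--db-snapshot-identifier', snapshot_id,
            '--db-instance-identifier', instance_id]


def delete_snapshot_argv(snapshot_id):
    """Argument list for `aws rds delete-db-snapshot`."""
    return ['aws', 'rds', 'delete-db-snapshot',
            '--db-snapshot-identifier', snapshot_id]


def as_command_line(argv):
    """Render an argument list as a shell-safe command string."""
    return ' '.join(shlex.quote(arg) for arg in argv)


create_cmd = as_command_line(create_snapshot_argv('postgresql', 'postgresqlsnap'))
delete_cmd = as_command_line(delete_snapshot_argv('postgresqlsnap'))
print(create_cmd)
print(delete_cmd)
```

To actually run one of them, pass the list (not the string) to subprocess.run(argv, check=True) on a machine where aws configure has been done.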

====================================

ROUTE 53 TEST RESULTS

A aaa.hasarangaprasad.tk > dualstack.test-675364060.us-west-2.elb.amazonaws.com ALIAS=yes === working

CNAME bbb.hasarangaprasad.tk > dualstack.test-675364060.us-west-2.elb.amazonaws.com ALIAS=yes === NOT working

CNAME ccc.hasarangaprasad.tk > dualstack.test-675364060.us-west-2.elb.amazonaws.com ALIAS=no === working

A hasarangaprasad.tk > dualstack.test-675364060.us-west-2.elb.amazonaws.com ALIAS=yes === working

CNAME hasarangaprasad.tk > dualstack.test-675364060.us-west-2.elb.amazonaws.com ALIAS=yes === NOT allowed

CNAME hasarangaprasad.tk > dualstack.test-675364060.us-west-2.elb.amazonaws.com ALIAS=no === NOT allowed
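The six results above can be kept as a small lookup table, which is handy when checking a planned record against what was actually tested. This sketch only encodes my observed results. It is not a full model of Route 53 rules, and any combination that was not tested returns 'untested'. The names OBSERVED and observed_outcome are made up for illustration.

```python
# (record_type, is_zone_apex, alias) -> outcome seen in the tests above
OBSERVED = {
    ('A', False, True): 'working',
    ('CNAME', False, True): 'not working',
    ('CNAME', False, False): 'working',
    ('A', True, True): 'working',
    ('CNAME', True, True): 'not allowed',
    ('CNAME', True, False): 'not allowed',
}


def observed_outcome(record_type, is_zone_apex, alias):
    """Look up the tested outcome for a record pointing at the ELB name."""
    key = (record_type.upper(), is_zone_apex, alias)
    return OBSERVED.get(key, 'untested')


print(observed_outcome('A', True, True))        # apex A record with alias
print(observed_outcome('cname', True, False))   # apex CNAME
print(observed_outcome('A', False, False))      # combination not tested above
```

The short version: for the bare domain, use an A record with ALIAS=yes. For a subdomain, use either an A record with ALIAS=yes or a plain CNAME with ALIAS=no.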
